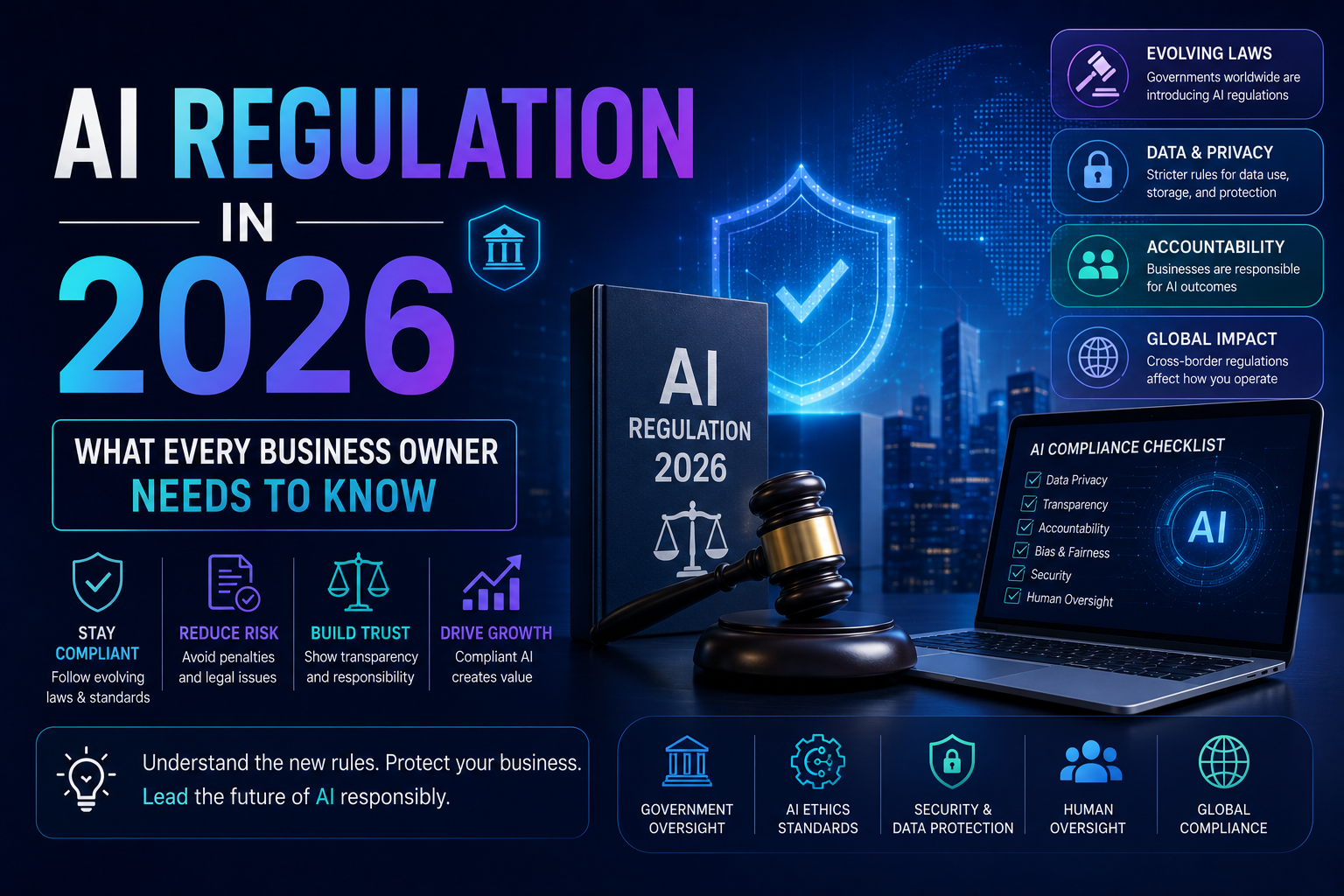

In 2026, AI regulation is no longer optional. It is a legal reality for businesses worldwide. The EU AI Act is in force, with most of its obligations applying from August 2026. The US has issued federal executive orders, and countries like India, China, and the UK are rolling out their own frameworks. If your business uses AI tools, even off-the-shelf software, you likely have compliance obligations. This guide breaks down what changed, what it means for you, and exactly what to do next.

Three years ago, most small business owners shrugged off AI regulation as something that only mattered to big tech companies. Then 2024 happened. The EU AI Act passed. US executive orders started stacking up. And suddenly, business owners who relied on AI-powered tools — chatbots, hiring software, credit scoring systems, customer analytics — found themselves on the wrong side of new rules they never knew existed.

By 2026, the global AI regulatory landscape has shifted dramatically. Whether you run a ten-person startup or a regional franchise, if AI touches any part of your business, you need to know what the law now expects from you. The good news? Getting compliant is not as complicated as it sounds — if you know where to start.

This guide will walk you through the major AI regulations active in 2026, break down what they actually mean in plain English, and give you a practical checklist to protect your business. No legal jargon. No fluff. Just the stuff that matters.

1. The Global AI Regulatory Landscape in 2026

The world did not agree on a single AI rulebook — and it probably never will. Instead, what emerged is a patchwork of regional laws, each with its own priorities, penalties, and timelines. Here is a snapshot of the major frameworks every business owner should know about.

Quick Comparison Table: Major AI Laws in 2026

| Region / Law | Status in 2026 | Who It Affects | Max Penalty |
|---|---|---|---|
| EU AI Act | In force; obligations phased in from 2025 to 2027 | Any business selling or using AI in the EU | €35M or 7% of worldwide annual turnover, whichever is higher |
| US AI Executive Orders | No comprehensive federal AI law; executive orders plus agency guidance | US government vendors and large AI deployers | Contract bans + fines |
| UK AI Framework | Voluntary + sector codes | UK-based businesses using AI in regulated sectors | Sector-specific |
| China AI Rules | Mandatory registration | Generative AI providers operating in China | Service suspension |
| India DPDP + AI Rules | Phased rollout ongoing | Businesses handling Indian citizens' data via AI | Up to ₹250 crore |
| Canada AIDA (Bill C-27) | Not enacted; the bill died when Parliament was prorogued in January 2025 | Would have covered high-impact AI systems in Canada | None in force (proposed: up to CA$25M or 5% of global revenue for the most serious offences) |

The important takeaway is that many of these laws have extraterritorial reach. The EU AI Act, for example, applies to any business that places AI systems on the EU market or whose AI output is used in the EU, even if the company is headquartered in India or the US, as well as to deployers based in the EU. Ignorance of the law is not a valid defense, and regulators have made clear they intend to enforce these rules.

The EU AI Act: The Law That Changed Everything

The EU AI Act is the world’s first comprehensive AI law, and it works on a risk-based model. It divides AI systems into four categories: Unacceptable Risk (banned outright), High Risk (heavily regulated), Limited Risk (transparency required), and Minimal Risk (largely unregulated).

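- Unacceptable Risk: includes social scoring, real-time remote biometric identification in public spaces for law enforcement (with narrow exceptions), emotion recognition in workplaces and schools (outside medical or safety uses), and subliminal manipulation. These are banned entirely.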

- High Risk — includes AI used in hiring, credit, education, healthcare, and law enforcement. Requires conformity assessments, human oversight, and data governance.

- Limited Risk — chatbots and deepfakes must disclose that users are interacting with AI.

- Minimal Risk — spam filters, AI in video games, etc. No specific obligations.

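| 💡 Key Date: From August 2, 2026, providers of most High-Risk AI systems must register them in the EU’s AI database before placing them on the market, and public-sector deployers must also register their use. If you use AI-powered hiring tools, credit checks, or loan scoring software, ask your vendor to confirm the system is registered. |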

2. What ‘High-Risk AI’ Actually Means for Your Business

The phrase ‘high-risk AI’ sounds dramatic, but in practical terms, it covers a surprising number of everyday business tools. If you use any of the following, you may be operating a high-risk AI system — whether you built it or just purchased a SaaS subscription.

- AI resume screeners or applicant tracking systems

- Credit risk or loan approval software with AI components

- AI used for risk assessment and pricing in life or health insurance

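- Automated fraud detection, with a caveat: the Act expressly excludes AI used to detect financial fraud from its high-risk credit-scoring category, so many fraud tools fall outside it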

- Predictive tools used in education or student assessment

- AI systems used in healthcare triage or diagnostics

The critical thing to understand is that you don’t have to build the AI yourself to be liable. If you deploy a third-party AI system for any of these purposes, the EU AI Act considers you the ‘deployer’ — and deployers have their own set of obligations under the law.

Deployer Obligations Under the EU AI Act

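- Conduct a Fundamental Rights Impact Assessment (FRIA) before first use if you are a public body or provide public services, or if you use AI for credit scoring or life and health insurance pricing

- Ensure human oversight: assign staff with the training and authority to review, override, or halt the system's decisions

- Keep the logs the system automatically generates for at least six months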

- Inform employees when AI is used to monitor or evaluate them

- Report serious incidents or malfunctions to the relevant national authority

| 💡 Pro Tip: Ask your SaaS vendors for their AI Act compliance documentation. Any reputable vendor serving EU markets should already have this ready. If they don’t — that’s a red flag. |

3. The US AI Regulatory Picture in 2026

The United States has taken a more fragmented approach to AI regulation compared to the EU. Rather than a single comprehensive law, the US has a mix of federal executive orders, sector-specific regulations, and an increasingly active state-level legislative environment.

Federal Level: Executive Orders and Agency Rules

The Biden-era Executive Order on Safe, Secure, and Trustworthy AI (October 2023) set the tone, but it was revoked in January 2025 and replaced by orders focused on removing barriers to AI development. Federal agencies like the FTC, EEOC, and CFPB have applied existing consumer-protection, anti-discrimination, and lending laws to AI, although some of their earlier AI-specific guidance has since been withdrawn. If your business is a federal contractor or vendor, AI transparency and safety requirements may already be embedded in your contracts.

State-Level AI Laws to Watch

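| State | Key AI Law / Regulation | Business Impact |
|---|---|---|
| California | SB 53 (Transparency in Frontier Artificial Intelligence Act) + CCPA automated decision-making regulations | Safety and transparency reporting for large frontier model developers; consumer rights around automated decisions |
| Colorado | SB 24-205 (Colorado AI Act), effective June 30, 2026 | Duty to avoid algorithmic discrimination in high-risk AI; notice, explanation, and appeal rights for adverse AI decisions |
| Texas | TRAIGA (HB 149), effective January 1, 2026 | Bans AI deployed with intent to unlawfully discriminate and certain other harmful uses |
| Illinois | AIVIA (Artificial Intelligence Video Interview Act) + HB 3773 (Human Rights Act amendment, effective 2026) | Consent + notice when AI analyzes job interviews; ban on discriminatory AI use in employment decisions |
| New York City | Local Law 144 | Bias audits and candidate notice required for AI hiring tools used in NYC |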

The patchwork nature of US state laws creates real complexity for businesses operating across state lines. A hiring tool that is legal in Texas might require a bias audit in New York City and applicant consent for AI-analyzed video interviews in Illinois. Multi-state businesses need a unified AI governance policy that satisfies the strictest applicable standard.

4. The Real Cost of Non-Compliance

Let’s be direct: the penalties for getting AI regulation wrong are not minor. And beyond the fines, the reputational harm from a public enforcement action can hurt a small or mid-sized business even more.

| Violation Type | Maximum Fine | Example Scenario |
|---|---|---|
| Deploying a prohibited AI system (EU) | Up to €35 million or 7% of worldwide annual turnover | Banned biometric surveillance system |
| High-risk AI without conformity assessment (EU) | Up to €15 million or 3% of turnover | AI hiring tool used without the required assessment |
| Failure to disclose an AI chatbot to users (EU) | Up to €15 million or 3% of turnover | Customer service bot without disclosure |
| Supplying incorrect or misleading information to authorities (EU) | Up to €7.5 million or 1% of turnover | Inaccurate answers to a regulator's request |
| Biased AI hiring tool (US/NYC) | Civil penalties + discrimination lawsuits | NYC Local Law 144 violations |
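The EU ceilings in this table scale with company size. For most companies, the cap is the fixed amount or the percentage of worldwide annual turnover, whichever is higher; for SMEs and start-ups, Article 99(6) of the Act applies whichever is lower. The minimal Python sketch below shows that arithmetic for the first two tiers in the table. The tier labels and the `max_fine_eur` function are our own illustrative names, and the result is a statutory ceiling, not a prediction of what a regulator would actually impose.

```python
# EU AI Act fine ceilings (Article 99): fixed cap in EUR and share of
# worldwide annual turnover in basis points (1% = 100 bp).
# Tier labels are illustrative, not legal terms.
FINE_TIERS = {
    "prohibited_practice": (35_000_000, 700),    # EUR 35M or 7%
    "high_risk_obligations": (15_000_000, 300),  # EUR 15M or 3%
}


def max_fine_eur(tier: str, annual_turnover_eur: int, is_sme: bool) -> int:
    """Return the statutory ceiling for one violation tier."""
    fixed_cap, basis_points = FINE_TIERS[tier]
    # Integer arithmetic avoids floating-point rounding on money amounts.
    turnover_cap = annual_turnover_eur * basis_points // 10_000
    # SMEs and start-ups: whichever is lower. Everyone else: whichever is higher.
    if is_sme:
        return min(fixed_cap, turnover_cap)
    return max(fixed_cap, turnover_cap)


if __name__ == "__main__":
    # Hypothetical SME with EUR 4 million turnover, high-risk tier.
    print(max_fine_eur("high_risk_obligations", 4_000_000, is_sme=True))
    # Hypothetical enterprise with EUR 2 billion turnover, prohibited tier.
    print(max_fine_eur("prohibited_practice", 2_000_000_000, is_sme=False))
```

For the hypothetical SME with €4 million in turnover, the high-risk ceiling works out to €120,000 (3% of turnover) rather than €15 million, while the €2 billion company faces a ceiling of €140 million (7%) for a prohibited practice.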

Beyond fines, discrimination lawsuits over AI in employment are growing in the US. In Mobley v. Workday, for example, a federal court allowed age-discrimination claims against an AI screening vendor to proceed as a collective action. A single poorly configured AI hiring tool can expose a company to claims from hundreds of job applicants. The math here is simple: the cost of compliance is almost always less than the cost of a lawsuit.

5. Your AI Compliance Checklist for 2026

The following checklist covers the practical steps every business owner should take. Treat this as a starting framework, not a complete legal audit — complex situations always warrant professional legal counsel.

Step 1: Take Inventory of All AI Tools You Use

You cannot manage what you cannot see. Start by listing every AI-powered tool your business uses — from marketing automation to HR software to customer service chatbots. Include off-the-shelf SaaS products with AI features, not just custom-built systems.

Step 2: Classify Each Tool by Risk Level

Using the EU AI Act risk framework as a guide, categorize each tool. Anything that falls into a prohibited category must be switched off. High-risk tools need immediate attention. Limited-risk tools need disclosure mechanisms. Minimal-risk tools need monitoring but no urgent action.
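If you keep your inventory in a spreadsheet or a script, Steps 1 and 2 can be combined into a first-pass triage. The Python sketch below is a minimal illustration, assuming you tag each tool with hypothetical use-case labels (`hiring`, `customer_chatbot`, and so on) that we chose to mirror the examples in this guide. The tag sets are far from exhaustive, the tool and vendor names are made up, and a match is a prompt for legal review, not a legal classification.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical use-case tags based on the examples in this guide.
# A match flags a tool for review; it is not a legal classification.
PROHIBITED_USES = {"social_scoring", "subliminal_manipulation"}
HIGH_RISK_USES = {
    "hiring",
    "credit_scoring",
    "student_assessment",
    "life_or_health_insurance_pricing",
    "patient_triage",
}
LIMITED_RISK_USES = {"customer_chatbot", "deepfake_generation"}


@dataclass
class AITool:
    name: str
    vendor: str
    use_cases: set[str]


def triage(tool: AITool) -> RiskTier:
    # Check the most severe tier first so the strictest match wins.
    if tool.use_cases & PROHIBITED_USES:
        return RiskTier.UNACCEPTABLE
    if tool.use_cases & HIGH_RISK_USES:
        return RiskTier.HIGH
    if tool.use_cases & LIMITED_RISK_USES:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


if __name__ == "__main__":
    # Step 1: the inventory (tool and vendor names are hypothetical).
    inventory = [
        AITool("Resume screener", "ExampleHR", {"hiring"}),
        AITool("Website support bot", "ExampleChat", {"customer_chatbot"}),
        AITool("Email spam filter", "ExampleMail", {"spam_filtering"}),
    ]
    # Step 2: a first-pass risk tier for each tool.
    for tool in inventory:
        print(f"{tool.name} ({tool.vendor}): {triage(tool).value}")
```

Checking the prohibited set first means a tool with several uses is flagged at its most serious tier, in the same spirit as meeting the strictest applicable standard.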

Step 3: Review Vendor Compliance Documentation

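For every high-risk tool, request compliance documentation from your vendor. Look for evidence of an EU AI Act conformity assessment (such as the EU declaration of conformity and CE marking), the provider's instructions for use, bias audit reports, data governance policies, and incident response procedures.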

Step 4: Update Your Privacy Policy and Terms

If your business uses AI in ways that affect customers, such as personalization, content moderation, or pricing, your privacy policy and terms of service need to disclose this. Transparency is not just good practice; it is increasingly a legal requirement, including under the EU AI Act and privacy laws such as the GDPR and CCPA.

Step 5: Establish Human Oversight Processes

For high-risk decisions — hiring, lending, major customer service outcomes — ensure a human being is reviewing AI recommendations before final decisions are made. Document this process. Regulators want to see proof of meaningful human oversight, not just a rubber-stamp review.
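Documenting oversight can be as simple as refusing to finalize a decision until a named reviewer has recorded a rationale. The Python sketch below illustrates that idea with a hypothetical `ReviewRecord` and `finalize_decision` (our own names, not any product's API) that append each review to a JSON Lines audit file. It is a sketch under those assumptions; a real system would also need access controls and whatever retention period your legal counsel specifies.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class ReviewRecord:
    """One human review of an AI recommendation (hypothetical schema)."""
    case_id: str
    ai_recommendation: str
    reviewer: str
    final_decision: str
    rationale: str
    # UTC timestamp captured when the record is created.
    reviewed_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def finalize_decision(record: ReviewRecord, log_path: str) -> ReviewRecord:
    # Block finalization unless a named person reviewed the case and
    # wrote down why they agreed with or overrode the AI.
    if not record.reviewer.strip():
        raise ValueError("A named human reviewer is required.")
    if not record.rationale.strip():
        raise ValueError("The reviewer must record a rationale.")
    # Append one JSON object per line so earlier entries are never rewritten.
    with open(log_path, "a", encoding="utf-8") as log:
        log.write(json.dumps(asdict(record)) + "\n")
    return record
```

Logging the AI recommendation next to the final decision also lets you show how often reviewers actually override the tool, which is one way to demonstrate that review is not a rubber stamp.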

Step 6: Train Your Team

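Every employee who uses AI tools or makes decisions influenced by AI should receive basic AI literacy training. They should understand what the tool does, its limitations, and what red flags to look for. For businesses covered by the EU AI Act, this is not optional: since February 2025, providers and deployers have been required to ensure their staff have sufficient AI literacy.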

| 💡 Recommended Tool: Tools like OneTrust AI Governance, IBM OpenPages, or Credo AI can help automate much of your AI compliance documentation and audit trail requirements. These platforms are increasingly being adopted by SMBs, not just enterprises. |

6. Tools That Make AI Compliance Easier (Our Recommendations)

Staying compliant does not have to mean hiring a team of lawyers. The right tools can automate much of the heavy lifting — from vendor risk assessments to audit trails. Here are the platforms we recommend most to business owners navigating AI compliance in 2026. (Disclosure: Some links below are affiliate links. We only recommend tools we genuinely believe in.)

🔧 OneTrust AI Governance Platform

OneTrust is the gold standard for privacy and AI governance. Their AI Governance module helps you map your AI systems, classify them by risk, document vendor compliance, and generate audit-ready reports. Best for: mid-sized businesses and enterprises with complex vendor ecosystems. Pricing starts at around $1,200/month.

→ Try OneTrust AI Governance [Affiliate Link]

🔧 Credo AI — AI Risk Management

Credo AI is a purpose-built AI risk and compliance platform designed for teams who need to track, test, and document AI systems without a large compliance team. It integrates with common ML tools and produces compliance-ready reports aligned with the EU AI Act and NIST AI RMF. Great for: tech-savvy SMBs and AI product teams.

→ Try Credo AI Free Trial [Affiliate Link]

🔧 Termly — AI Policy & Privacy Policy Generator

If you need to update your website’s privacy policy and terms to disclose AI use, Termly makes it fast and affordable. Their AI-specific policy templates are already updated for 2026 regulations. Best for: small businesses needing a quick, compliant policy update without a lawyer’s bill. Plans start from $10/month.

→ Create Your AI-Compliant Privacy Policy with Termly [Affiliate Link]

7. Frequently Asked Questions (FAQ)

Q: Does the EU AI Act apply to my small business?

A: If your business operates in or sells to EU markets, yes: it applies regardless of your company size. However, enforcement priorities tend to focus on higher-risk systems, and the Act gives SMEs some relief, such as simplified technical documentation for smaller providers and lower fine caps. That said, you still need to know which category your AI tools fall into.

Q: I just use ChatGPT for content writing. Am I at risk?

A: Using ChatGPT or similar generative AI tools for content creation is generally classified as Minimal Risk under the EU AI Act. You do not face significant compliance burdens here. The main obligation is transparency if AI-generated content could be mistaken for human-created content — for instance, deepfake videos or AI news articles.

Q: What if my vendor claims their AI tool is compliant — do I still need to check?

A: Yes. As a deployer, you share responsibility for ensuring the AI systems you use comply with applicable law. Vendor claims are a starting point, not a guarantee. Ask for their conformity assessment or third-party audit reports. Your due diligence is your protection.

Q: Is there a grace period for AI Act compliance in 2026?

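A: Most grace periods have now expired or are about to. Prohibited AI practices have been banned since February 2, 2025, and general-purpose AI model obligations took effect on August 2, 2025. Most high-risk requirements apply from August 2, 2026, and high-risk AI built into products covered by EU product-safety law follows in August 2027. The European Commission has proposed pushing back some high-risk deadlines, so check the current timeline. If you have not started your compliance journey, now is the time.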
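To check which EU AI Act milestones already apply on a given date, the key dates fit in a small lookup. This Python sketch encodes the application dates in Article 113 of the Act as originally adopted; the milestone descriptions are our own summaries, and any later amendment to the timeline would mean updating the list.

```python
from datetime import date

# Application dates set by Article 113 of the EU AI Act as adopted.
# Proposed amendments (for example, delays to some high-risk deadlines)
# are NOT reflected; check the current official timeline.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices banned; AI literacy duty applies"),
    (date(2025, 8, 2), "General-purpose AI model obligations apply"),
    (date(2026, 8, 2), "Most remaining rules apply, including Annex III high-risk and transparency duties"),
    (date(2027, 8, 2), "High-risk rules for AI in products under EU product-safety law apply"),
]


def obligations_in_force(on: date) -> list[str]:
    """List the milestones whose start date is on or before the given date."""
    return [label for start, label in MILESTONES if start <= on]


if __name__ == "__main__":
    for label in obligations_in_force(date.today()):
        print("-", label)
```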

Q: What is the NIST AI Risk Management Framework and do I need it?

A: The NIST AI RMF is a US-developed voluntary framework for managing AI-related risks. It is not legally mandated for most businesses, but it is increasingly referenced by federal contractors and used as a benchmark by enterprise customers. Adopting it is a smart move if you sell B2B, especially to government or regulated industries.

Q: Can I be sued by employees for using AI in HR decisions?

A: Yes. Employment discrimination lawsuits involving AI-powered HR tools are rising in the US. Several states and cities now mandate bias audits, impact assessments, or disclosure when AI is used in hiring or employment decisions. And federal anti-discrimination laws such as Title VII apply to AI-assisted hiring decisions just as they do to decisions made entirely by people.

Final Thoughts: Start Before You Have To

AI regulation in 2026 is not a distant concern — it is a present-day business reality. The businesses that will come out ahead are the ones treating compliance not as a burden, but as a competitive advantage. Customers, investors, and enterprise buyers are increasingly asking about AI governance before signing contracts. Being the business that can say ‘yes, we have an AI governance policy’ is a genuine differentiator.

Start with the checklist in Section 5. Get a clear picture of the AI tools you use. Talk to your vendors. Update your policies. And if your AI use is complex, hire or consult someone who specializes in AI law. The cost of that advice is almost always less than the cost of finding out you needed it after a regulatory action.

The rules are here. The enforcement is real. And the opportunity to get ahead of it — right now, while many competitors are still looking the other way — is wide open.

Disclosure: This blog contains affiliate links. If you purchase through these links, we may earn a commission at no additional cost to you. All recommendations are based on genuine editorial judgment. This article is for informational purposes only and does not constitute legal advice. Consult a qualified attorney for legal guidance.